Lakera Guard

Premium

Unlocking Secure AI: A Deep Dive into Lakera Guard for Enterprise LLM Protection

As enterprises increasingly integrate Large Language Models (LLMs) into their applications, the need for robust security and responsible AI practices has never been more critical. The dynamic and generative nature of LLMs introduces a new frontier of vulnerabilities, from sophisticated prompt injections to unintended data leakage and the generation of harmful content. Enter Lakera Guard, an AI security platform designed to act as a real-time shield for your LLM applications. Located at lakera.ai, Lakera Guard positions itself as a developer-first solution to proactively detect and mitigate a wide array of LLM-specific threats.

This comprehensive SEO review will explore Lakera Guard's core features, weigh its advantages and disadvantages, and benchmark it against other prominent AI security tools in the market, helping you determine if it's the right fit for your organization's AI journey.

Deep Features Analysis: The Lakera Guard Arsenal

Lakera Guard provides a multi-layered defense strategy, integrating seamlessly into your existing AI architecture. Its feature set is built around real-time threat detection and prevention, offering a significant upgrade to generic content moderation tools.

- Real-time Prompt Injection Detection: This is arguably one of Lakera Guard's most critical features. It actively identifies and blocks malicious inputs designed to bypass system instructions, extract sensitive data, or manipulate LLM behavior. This includes direct prompt injections, indirect injections, and sophisticated jailbreaking attempts, ensuring the integrity and intended behavior of your LLMs.

- Sensitive Data Leakage Prevention (PII/PHI Redaction): Lakera Guard is adept at identifying and redacting Personally Identifiable Information (PII) and Protected Health Information (PHI) from both user inputs and LLM outputs. This crucial capability helps organizations maintain compliance with regulations like GDPR, HIPAA, and CCPA, preventing inadvertent exposure of sensitive user data.

- Harmful Content & Abuse Detection: Beyond basic profanity filters, Lakera Guard employs advanced AI models to detect and block a wide spectrum of harmful content, including hate speech, harassment, violent threats, sexual content, and illegal activities. This protects your users from toxic interactions and safeguards your brand reputation.

- Security for RAG Applications: Retrieval Augmented Generation (RAG) models, while powerful, introduce new security considerations, especially concerning the quality and safety of retrieved external data. Lakera Guard extends its protection to RAG applications, ensuring the integrity of the retrieved context and preventing the LLM from being poisoned by malicious or biased external information.

- Developer-First APIs & SDKs: Lakera Guard emphasizes ease of integration. It offers well-documented APIs and SDKs that allow developers to quickly incorporate its security capabilities into their existing applications, whether they are using OpenAI, Anthropic, Hugging Face, or other LLM providers. This low-friction integration is key for rapid deployment and iteration.

- Customizable Policies & Rules: While offering out-of-the-box protection, Lakera Guard also allows enterprises to define and customize their own security policies and rules. This flexibility enables organizations to tailor the guardrails to their specific use cases, industry regulations, and risk tolerance.

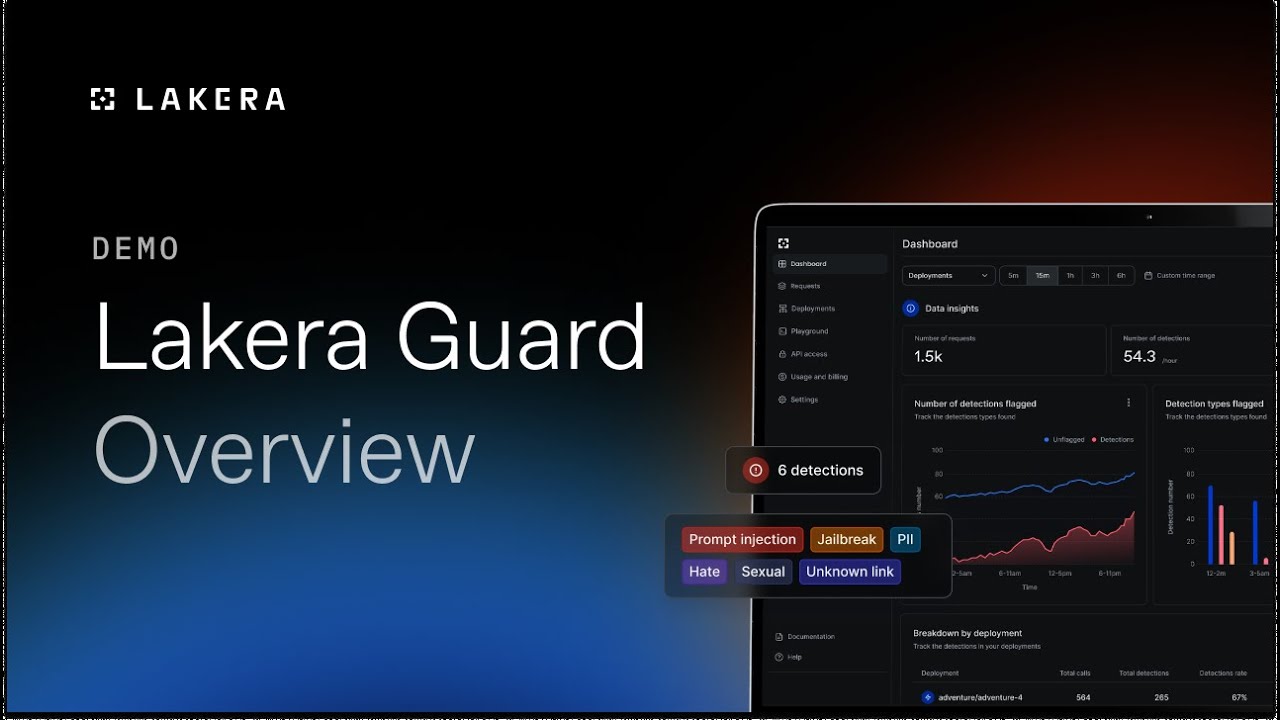

- Comprehensive Dashboard & Analytics: A user-friendly dashboard provides visibility into detected threats, blocked prompts, and overall LLM security posture. This includes detailed logs, analytics on attack types, and performance metrics, allowing security teams to monitor, analyze, and refine their defense strategies effectively.

- Scalability & Performance: Designed for enterprise use, Lakera Guard is built to handle high volumes of API calls without introducing significant latency, ensuring that security measures don't impede the performance or user experience of your LLM applications.

Pros and Cons of Lakera Guard

Evaluating any security tool requires a balanced perspective. Here's a look at Lakera Guard's strengths and potential drawbacks:

Pros:

- Comprehensive Real-time Protection: Offers a wide array of protections (prompt injection, data leakage, harmful content) in real-time, which is crucial for dynamic LLM interactions.

- Strong Focus on Prompt Injection: Excels in detecting sophisticated prompt injection and jailbreaking attempts, a major vulnerability for many LLM applications.

- Data Privacy & Compliance: Robust PII/PHI redaction capabilities are vital for organizations operating under strict data privacy regulations.

- Developer-Friendly Integration: APIs and SDKs simplify the process of embedding security into existing workflows, accelerating deployment.

- Enhanced RAG Security: Addresses a growing concern by providing specific security measures for Retrieval Augmented Generation architectures.

- Customization: Policy customization allows tailoring security to specific business needs and risk profiles.

- Visibility & Reporting: The dashboard provides critical insights into security events and LLM usage patterns.

Cons:

- Potential Latency Overhead: While designed for performance, introducing an additional layer (a proxy or API call) can inherently add some degree of latency, which might be a consideration for extremely low-latency applications.

- Cost Implications: As a specialized enterprise-grade solution, Lakera Guard will likely come with a significant cost, which might be a barrier for smaller businesses or startups with limited budgets.

- Integration Complexity for Legacy Systems: While developer-friendly, integrating with highly complex or legacy enterprise systems might still require dedicated effort.

- Dependency on a Third-Party: Organizations become reliant on Lakera for their LLM security posture, requiring trust in their ongoing development and maintenance.

- Evolving Threat Landscape: The nature of LLM threats is constantly evolving. Lakera Guard, like any security solution, must continuously adapt, requiring vigilance from both the vendor and the user.

Comparison and Alternatives: Benchmarking Lakera Guard

The LLM security landscape is rapidly evolving, with several players offering solutions to address various facets of AI safety. Here's how Lakera Guard stacks up against some notable alternatives:

1. NVIDIA NeMo Guardrails

- What it is: NeMo Guardrails is an open-source toolkit developed by NVIDIA for programming "guardrails" for conversational AI. It allows developers to define rules, topics, and actions to control LLM behavior, prevent specific responses, and connect LLMs to external systems.

- Comparison with Lakera Guard:

- Approach: NeMo Guardrails is a programmatic, rule-based approach where developers explicitly define the boundaries and behaviors. Lakera Guard is more of a real-time, AI-powered detection and prevention gateway that acts as an inline shield.

- Flexibility vs. Ease of Use: NeMo Guardrails offers immense flexibility and deep control for developers who want to intricately define LLM interactions. Lakera Guard offers more out-of-the-box, comprehensive protection with less initial configuration effort for core threats like prompt injection and data leakage.

- Detection vs. Control: Lakera Guard excels at *detecting* and *blocking* malicious inputs/outputs. NeMo Guardrails focuses more on *controlling* the LLM's flow and topic adherence.

- Deployment: NeMo Guardrails is open-source and self-hosted, giving full control but requiring more operational overhead. Lakera Guard is a managed service, simplifying deployment and maintenance.

2. Garak AI

- What it is: Garak AI focuses on LLM security testing and red teaming. It provides tools and services to identify vulnerabilities in LLM applications, simulating adversarial attacks to uncover prompt injection flaws, data exfiltration risks, and other security weaknesses before deployment.

- Comparison with Lakera Guard:

- Function: Garak AI is primarily a *testing and vulnerability assessment* tool. Lakera Guard is a *real-time detection and prevention* solution.

- Stage of SDLC: Garak AI operates more in the pre-deployment and continuous integration phases (Shift Left Security). Lakera Guard operates primarily in the runtime environment, providing live protection.

- Complementary Use: Garak AI and Lakera Guard can be highly complementary. Garak could be used to discover vulnerabilities, which then informs the configuration and validation of Lakera Guard's real-time defenses.

- Focus: Garak AI is about finding the holes. Lakera Guard is about patching them in real-time.

3. OpenAI Moderation API / Azure AI Content Safety

- What it is: These are built-in content moderation services offered by major LLM providers. They allow developers to classify text for various categories of harmful content (e.g., hate, sexual, self-harm, violence) and receive scores or flags.

- Comparison with Lakera Guard:

- Scope: OpenAI Moderation and Azure AI Content Safety are primarily focused on detecting harmful *content*. Lakera Guard offers a much broader scope, including prompt injection, data leakage (PII/PHI redaction), and more advanced adversarial attack detection.

- Depth of Detection: While good for basic content moderation, these built-in tools often lack the sophistication to detect complex prompt injections or indirect attacks that Lakera Guard is designed to catch.

- Integration: They are native to their respective platforms. Lakera Guard is a vendor-agnostic solution that sits in front of *any* LLM, offering a unified security layer.

- Customization: Lakera Guard offers more granular customization for policies beyond simple content categories.

- Cost: Often included or very low-cost per usage, making them a good starting point, but not a full-fledged enterprise security solution.

Conclusion: Securing the Future of Enterprise AI with Lakera Guard

Lakera Guard emerges as a compelling solution for enterprises serious about securing their LLM applications. Its real-time, multi-faceted approach to detecting and preventing prompt injections, data leaks, and harmful content positions it as a robust guardian for AI deployments. While alternatives like NeMo Guardrails offer deep programmatic control and tools like Garak AI specialize in vulnerability testing, Lakera Guard provides a comprehensive, easy-to-integrate, and proactive defense layer for runtime LLM security.

For organizations prioritizing compliance, data privacy, and brand reputation in their AI endeavors, Lakera Guard offers a powerful blend of advanced threat detection, developer-friendly tooling, and scalability. As the AI landscape continues to evolve, investing in a dedicated LLM security platform like Lakera Guard is not just a best practice, but a strategic imperative to build trustworthy and resilient AI applications.