Kolena Restructured


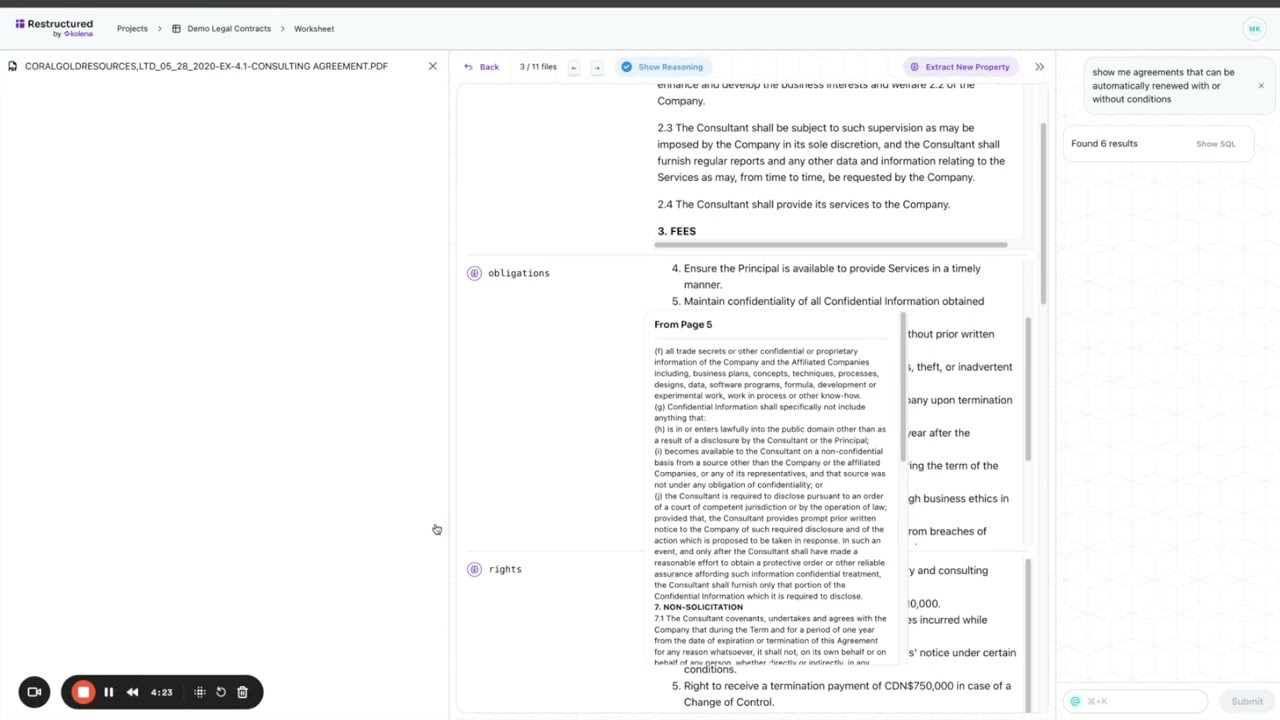

In the rapidly evolving landscape of artificial intelligence and machine learning, the deployment of robust, reliable, and fair models is paramount. As models become more complex and data volumes swell, the need for systematic testing and evaluation tools has never been more critical. Enter Kolena Restructured, an AI tool designed to bring rigorous engineering principles to machine learning model testing and validation. Operating from kolena.com, Kolena aims to empower ML teams to ship high-quality models with confidence by providing a dedicated platform for systematic evaluation, debugging, and collaboration.

This SEO review examines Kolena Restructured's core functionality, weighs its advantages and disadvantages, and positions it within the broader ecosystem of AI/ML tools.

Deep Features Analysis: Unpacking Kolena's Capabilities

Kolena Restructured is built on the philosophy that ML models, like traditional software, require comprehensive testing to identify weaknesses, ensure performance, and prevent costly failures in production. It offers a structured approach to model evaluation that goes far beyond simple metric dashboards.

1. Systematic Testing with Test Suites and Pipelines

- Structured Evaluation: Kolena allows users to define explicit test suites and pipelines. A test suite is a collection of tests designed to evaluate a model's performance on specific data slices or scenarios. Pipelines automate the execution of these suites, ensuring consistent and repeatable evaluation across model versions.

- Version Control for Tests: Just like code, test definitions can be versioned, allowing teams to track changes in evaluation criteria and re-run historical tests against new model iterations.

- Pre-defined & Custom Tests: While Kolena supports standard metrics, its strength lies in enabling users to define custom tests tailored to their specific use cases, covering everything from fairness metrics to domain-specific performance checks.
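
To make the idea of a test suite concrete, here is a minimal sketch in plain Python. It does not use Kolena's client or API; `SliceCheck`, `run_suite`, and the sample records are hypothetical names invented for illustration. Each check pairs a data slice with a minimum accuracy, and the suite runs every check against the same set of predictions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical record shape: metadata tags plus a label and a prediction.
Sample = Dict[str, object]


@dataclass(frozen=True)
class SliceCheck:
    """One named check: a data slice plus the minimum accuracy it must reach."""
    name: str
    in_slice: Callable[[Sample], bool]
    min_accuracy: float


def accuracy(samples: List[Sample]) -> float:
    """Fraction of samples whose prediction matches the label."""
    if not samples:
        return 0.0
    hits = sum(1 for s in samples if s["prediction"] == s["label"])
    return hits / len(samples)


def run_suite(suite: List[SliceCheck], samples: List[Sample]) -> Dict[str, dict]:
    """Evaluate every check on its own slice and report pass/fail."""
    report = {}
    for check in suite:
        subset = [s for s in samples if check.in_slice(s)]
        acc = accuracy(subset)
        report[check.name] = {
            "n": len(subset),
            "accuracy": acc,
            # An empty slice counts as a failure rather than a silent pass.
            "passed": bool(subset) and acc >= check.min_accuracy,
        }
    return report


samples = [
    {"lighting": "day", "label": "car", "prediction": "car"},
    {"lighting": "day", "label": "bike", "prediction": "bike"},
    {"lighting": "night", "label": "car", "prediction": "truck"},
    {"lighting": "night", "label": "bike", "prediction": "bike"},
]

suite = [
    SliceCheck("all data", lambda s: True, 0.70),
    SliceCheck("night scenes", lambda s: s["lighting"] == "night", 0.70),
]

report = run_suite(suite, samples)
for name, result in report.items():
    print(name, result)
```

In this toy data the model clears the threshold on the full set but fails on the night-time slice, which is the kind of gap a slice-level suite is meant to surface. Because the suite is ordinary data, it could be kept under version control alongside the model code.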

2. Data-Centric Evaluation and Slicing

- Intelligent Data Upload & Tagging: Users can upload their datasets (images, text, audio, tabular) and enrich them with metadata and tags. This tagging is crucial for Kolena's ability to create meaningful data slices.

- Dynamic Data Slicing: Kolena's powerful data slicing capabilities allow teams to isolate and test model performance on specific subsets of data. This could be data from a particular geographic region, specific demographic groups, challenging edge cases, or data points where the model previously failed. This helps uncover hidden biases and performance degradation.

- Failure Analysis & Root Cause Identification: By systematically testing on slices, Kolena helps pinpoint exactly where a model struggles. It's not just about knowing a model performed poorly; it's about understanding *why* and *on what kind of data*.
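
The slicing idea can be sketched without any platform at all. The snippet below is an illustration under assumptions, not Kolena's implementation: the `region` tag, the record layout, and the `accuracy_by_tag` helper are all hypothetical. It groups records by one tag and compares per-slice accuracy with the aggregate figure.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical tagged records: (tags, ground-truth label, model prediction)
Record = Tuple[Dict[str, str], int, int]


def accuracy_by_tag(records: Iterable[Record], tag: str) -> Dict[str, float]:
    """Group records by the value of one tag and compute accuracy per group."""
    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for tags, label, prediction in records:
        # Records missing the tag land in their own bucket instead of vanishing.
        value = tags.get(tag, "untagged")
        totals[value] += 1
        hits[value] += int(label == prediction)
    return {value: hits[value] / totals[value] for value in totals}


records: List[Record] = [
    ({"region": "eu"}, 1, 1),
    ({"region": "eu"}, 0, 0),
    ({"region": "eu"}, 1, 1),
    ({"region": "eu"}, 0, 0),
    ({"region": "apac"}, 1, 0),
    ({"region": "apac"}, 0, 0),
    ({}, 1, 1),
    ({}, 1, 1),
]

overall = sum(int(label == pred) for _, label, pred in records) / len(records)
per_region = accuracy_by_tag(records, "region")
worst = min(per_region, key=per_region.get)

print(f"overall accuracy: {overall:.3f}")
print(f"per region: {per_region}")
print(f"weakest slice: {worst}")
```

The aggregate accuracy looks healthy while one region sits at 50%, and the `untagged` bucket shows how much data a slice-based analysis cannot reach when metadata is missing.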

3. Comprehensive Performance Metrics & Visualization

- Dashboarding & Reporting: Kolena provides intuitive dashboards to visualize model performance across various test suites and data slices. This includes standard metrics (accuracy, precision, recall, F1-score, AUC, etc.) as well as custom metrics defined by the user.

- Historical Performance Tracking: Teams can track how model performance evolves over time and across different versions, making it easy to see if new iterations introduce regressions or improvements.

- Comparative Analysis: The platform supports comparing multiple model versions or different models against the same test suite, enabling informed decisions about which model to deploy.
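
As a rough illustration of version-to-version comparison, the sketch below computes binary precision, recall, and F1 for two sets of predictions on the same labels and lists the metrics that regressed. The helper names, the `tolerance` parameter, and the data are assumptions made for this example; a platform such as Kolena would present the same comparison through its dashboards.

```python
from typing import Dict, List, Sequence


def precision_recall_f1(labels: Sequence[int], preds: Sequence[int]) -> Dict[str, float]:
    """Binary precision, recall and F1 for the positive class (label 1)."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def regressions(old: Dict[str, float], new: Dict[str, float],
                tolerance: float = 0.01) -> List[str]:
    """Names of metrics that dropped by more than `tolerance` between versions."""
    return [name for name in old if new[name] < old[name] - tolerance]


# Same held-out labels, predictions from two hypothetical model versions.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
preds_v1 = [1, 1, 1, 0, 0, 0, 0, 1]
preds_v2 = [1, 1, 0, 0, 0, 0, 0, 0]

v1 = precision_recall_f1(labels, preds_v1)
v2 = precision_recall_f1(labels, preds_v2)
dropped = regressions(v1, v2)

print("v1:", v1)
print("v2:", v2)
print("regressed metrics:", dropped)
```

Here the second version gains precision but loses recall and F1, a trade-off that a single headline number would hide.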

4. Advanced Debugging Capabilities

- Individual Sample Inspection: Users can drill down into individual data samples to understand model predictions, ground truth, and specific errors. For computer vision, this might involve visualizing bounding boxes, segmentation masks, or keypoints. For NLP, it could be attention maps or token-level predictions.

- Failure Modes Identification: Kolena helps categorize and analyze common failure modes, allowing engineers to prioritize improvements based on the most impactful issues.

- Iterative Improvement Workflow: By providing clear insights into model weaknesses, Kolena facilitates a feedback loop where engineers can retrain models, then re-evaluate them on the same problematic data slices to confirm fixes.
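
One simple way to think about failure-mode analysis is to bucket errors by what was confused with what, then pull up the samples behind the largest bucket. The sketch below does this with the standard library; the sample IDs, class names, and helper functions are hypothetical and are not taken from Kolena.

```python
from collections import Counter
from typing import List, Tuple

# Hypothetical evaluation records: (sample id, ground truth, prediction)
Result = Tuple[str, str, str]

results: List[Result] = [
    ("img_01", "cat", "cat"),
    ("img_02", "cat", "dog"),
    ("img_03", "cat", "dog"),
    ("img_04", "dog", "dog"),
    ("img_05", "fox", "dog"),
    ("img_06", "cat", "dog"),
    ("img_07", "fox", "fox"),
]


def failure_modes(results: List[Result]) -> List[Tuple[Tuple[str, str], int]]:
    """Count misclassifications by (ground truth, prediction), most common first."""
    confusions = Counter((truth, pred) for _, truth, pred in results if truth != pred)
    return confusions.most_common()


def samples_for(results: List[Result], truth: str, pred: str) -> List[str]:
    """IDs of the individual samples behind one failure mode, for inspection."""
    return [sid for sid, t, p in results if t == truth and p == pred]


modes = failure_modes(results)
(top_truth, top_pred), top_count = modes[0]
to_inspect = samples_for(results, top_truth, top_pred)

print("failure modes:", modes)
print(f"most common: {top_truth} predicted as {top_pred} ({top_count} samples)")
print("inspect:", to_inspect)
```

After retraining, re-running the same bucketing on the same samples shows whether the dominant failure mode has actually shrunk.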

5. Collaboration & MLOps Integration

- Team Collaboration: Kolena is designed for teams, enabling multiple engineers and stakeholders to work together on model evaluation, share insights, and track progress.

- API-First Approach: With a robust API, Kolena can seamlessly integrate into existing MLOps pipelines, automating the testing phase as part of CI/CD for ML. This means tests can be triggered automatically upon new model commits or deployments.

- Integration Ecosystem: While specific integrations depend on the current offerings, Kolena aims to be compatible with popular ML frameworks (PyTorch, TensorFlow) and other MLOps tools.
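
The CI/CD integration described above boils down to a quality gate: a step that fails the build when evaluation results fall below agreed thresholds. The following sketch shows that gate in isolation. It makes no calls to Kolena's API; the metric names, thresholds, and the `gate` function are assumptions, and in practice the metrics would come from whatever evaluation tool the pipeline runs.

```python
import sys
from typing import Dict


def gate(metrics: Dict[str, float], thresholds: Dict[str, float]) -> int:
    """Return a process exit code: 0 if every threshold is met, 1 otherwise."""
    failures = []
    for name, minimum in thresholds.items():
        value = metrics.get(name)
        # A missing metric is treated as a failure, not as a pass.
        if value is None or value < minimum:
            failures.append(f"{name}: got {value}, need >= {minimum}")
    for line in failures:
        print(f"FAIL {line}", file=sys.stderr)
    return 1 if failures else 0


# Hypothetical thresholds agreed by the team and results for a candidate model.
thresholds = {"accuracy": 0.90, "recall_night": 0.80}
candidate = {"accuracy": 0.93, "recall_night": 0.74}

exit_code = gate(candidate, thresholds)
print("gate exit code:", exit_code)

# In a real CI job the script would end with sys.exit(exit_code),
# so that a non-zero code blocks the merge or deployment.
```

Keeping the thresholds in a versioned file next to the model code means that a change in acceptance criteria goes through review like any other change.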

Pros of Kolena Restructured

- Enhanced Model Reliability: By systematically identifying weaknesses so they can be addressed before release, Kolena can improve the reliability and robustness of deployed ML models.

- Reduced Time to Production: Streamlining the testing and validation phase cuts guesswork and ad-hoc testing, so teams can ship models faster and with more confidence.

- Data-Centric Debugging: Moves beyond aggregate metrics to provide granular insights into model failures, allowing for targeted data collection and model retraining efforts.

- Improved Collaboration: Provides a centralized platform for ML teams to align on evaluation criteria, share findings, and collectively improve model quality.

- Scalability for Enterprise: Designed to handle complex models, large datasets, and diverse teams, making it suitable for enterprise-level ML operations.

- Focus on Edge Cases & Fairness: Its data-slicing capabilities are invaluable for uncovering performance issues related to underrepresented groups, rare scenarios, or biased data.

- Auditability and Compliance: Detailed test reports and historical performance tracking provide an auditable trail of model validation, crucial for regulatory compliance.

Cons of Kolena Restructured

- Learning Curve: Adopting a new, systematic testing paradigm can require an initial investment in learning the platform and rethinking existing evaluation workflows.

- Initial Setup & Integration Effort: While offering powerful integrations, the initial setup to integrate Kolena into existing MLOps pipelines and data sources can require engineering effort.

- Cost: As an enterprise-grade solution, Kolena is likely to carry a significant cost, which may put it out of reach for small teams or individual researchers with limited budgets (unless a free tier is available).

- Dependency on Data Quality & Tagging: The effectiveness of Kolena's data-centric approach heavily relies on the quality and richness of the metadata and tags provided with the datasets. Poor tagging can limit its debugging potential.

- Potential Overkill for Simple Projects: For very small, simple ML models with straightforward evaluation needs, Kolena's comprehensive features might be more than what's necessary, potentially adding unnecessary complexity.

Comparison and Alternatives

Kolena operates within the burgeoning MLOps ecosystem but occupies a specific niche focused on systematic model testing and evaluation. While many tools touch upon aspects of ML lifecycle management, Kolena's dedication to robust, pre-deployment validation sets it apart. Let's compare it with three other prominent tools:

1. MLflow (Open-Source MLOps Platform)

- What it is: MLflow is an open-source platform for managing the end-to-end machine learning lifecycle, encompassing experiment tracking, reproducible runs, model packaging, and model registry.

- Comparison with Kolena:

  - Focus: MLflow provides a broad set of tools for tracking experiments (parameters, metrics, code), versioning models, and deploying them. It's a general-purpose MLOps tool. Kolena, conversely, has a razor-sharp focus on the *testing, evaluation, and debugging* phase of ML models.

  - Strength: MLflow excels at giving you a holistic view of your ML development process and managing model lifecycle. Kolena excels at deep-diving into model quality, finding failure modes, and ensuring robustness *before* deployment.

  - Synergy: These tools are highly complementary. MLflow can track the models generated by various experiments, while Kolena can then take those models and put them through rigorous, systematic testing defined in test suites, providing detailed evaluation results that can even be logged back into MLflow.

2. Arize AI (ML Observability Platform)

- What it is: Arize AI is an ML observability platform that helps teams monitor, troubleshoot, and explain models in production. It focuses heavily on model drift detection, data quality issues, and performance degradation post-deployment.

- Comparison with Kolena:

  - Stage of Focus: Arize is primarily a *production* monitoring tool. Its strength lies in catching issues once a model is live and identifying why performance might be degrading over time due to real-world data shifts. Kolena is predominantly a *pre-deployment* testing and debugging tool, ensuring model quality *before* it ever reaches production.

  - Goal: Arize aims to provide alerts and root cause analysis for live models. Kolena aims to prevent those issues from ever reaching production by rigorous upfront testing.

  - Overlap: There's some conceptual overlap in "root cause analysis" and "failure identification," but Arize does it for production inference data, while Kolena does it for held-out test data in development/staging. Both are critical for robust ML.

3. Weights & Biases (W&B) (ML Development Platform)

- What it is: Weights & Biases is a popular platform for experiment tracking, model visualization, hyperparameter optimization, and dataset versioning, primarily used during the model development and training phases.

- Comparison with Kolena:

  - Primary Use: W&B is excellent for iterating on models, comparing different training runs, visualizing gradients, and understanding the training process. It provides rich dashboards for metrics collected during training. Kolena, while also providing dashboards, focuses specifically on defining and running structured *tests* on *trained* models against curated data splits to uncover specific failure modes.

  - Evaluation Depth: W&B can show you aggregated metrics over a validation set during training. Kolena goes a step further by allowing you to define complex test suites, systematically slice data, and drill down into individual sample failures post-training, aiming for a much deeper and more structured evaluation for deployment readiness.

  - Collaboration: Both offer strong collaboration features, but W&B leans more into collaborative experiment tracking, while Kolena emphasizes collaborative testing and debugging.

In essence, Kolena carves out a niche by focusing on systematic, data-centric model testing that complements broader MLOps platforms like MLflow and W&B, and acts as a crucial preventative measure before engaging with production monitoring tools like Arize AI.

Conclusion

Kolena Restructured represents a significant advancement in the methodology of machine learning development. By bringing a disciplined, engineering-first approach to model testing and validation, it addresses a critical pain point for ML teams: the lack of systematic methods to ensure model quality and reliability prior to deployment. Its powerful data-slicing capabilities, systematic test suites, and in-depth debugging tools empower teams to understand their models' weaknesses with unprecedented clarity.

While Kolena requires an initial investment in adoption and integration, its long-term benefits (reduced deployment risk, faster iteration cycles, and higher-quality AI products) make it a valuable tool for any organization serious about operationalizing robust and responsible AI. Kolena is not a monitoring tool; it is a pre-deployment platform for building confidence that your AI systems perform as expected, even in challenging real-world scenarios.